The first substantial account of how Palantir and the Pentagon are actually using AI in warfare, with a closer look at Palantir’s unique approach to problem-solving, which unlocked targeting software the military has wanted since Vietnam

Details on what may have caused the accidental bombing of an Iranian school

New details on the fight between the Pentagon and Anthropic, Palantir’s former AI partner, concerning an update that crippled the CDC’s AI systems and heightened the administration’s fears over who controls the tech

Sourcing includes military case studies, private and public demos of Palantir’s tech, and interviews with Akshay Krishnaswamy, Palantir’s chief architect; Ted Mabrey, its head of commercial business; various vertical leaders and engineers at Palantir; Emil Michael, the under secretary of war for research and engineering; and sources who spoke on the condition of anonymity.

In 2017, the Department of War launched “Project Maven.”

It began with a simple question, Cameron Stanley, the Pentagon’s chief digital and AI officer, said from the stage of Palantir’s AI conference last month: “What does the third offset look like?”

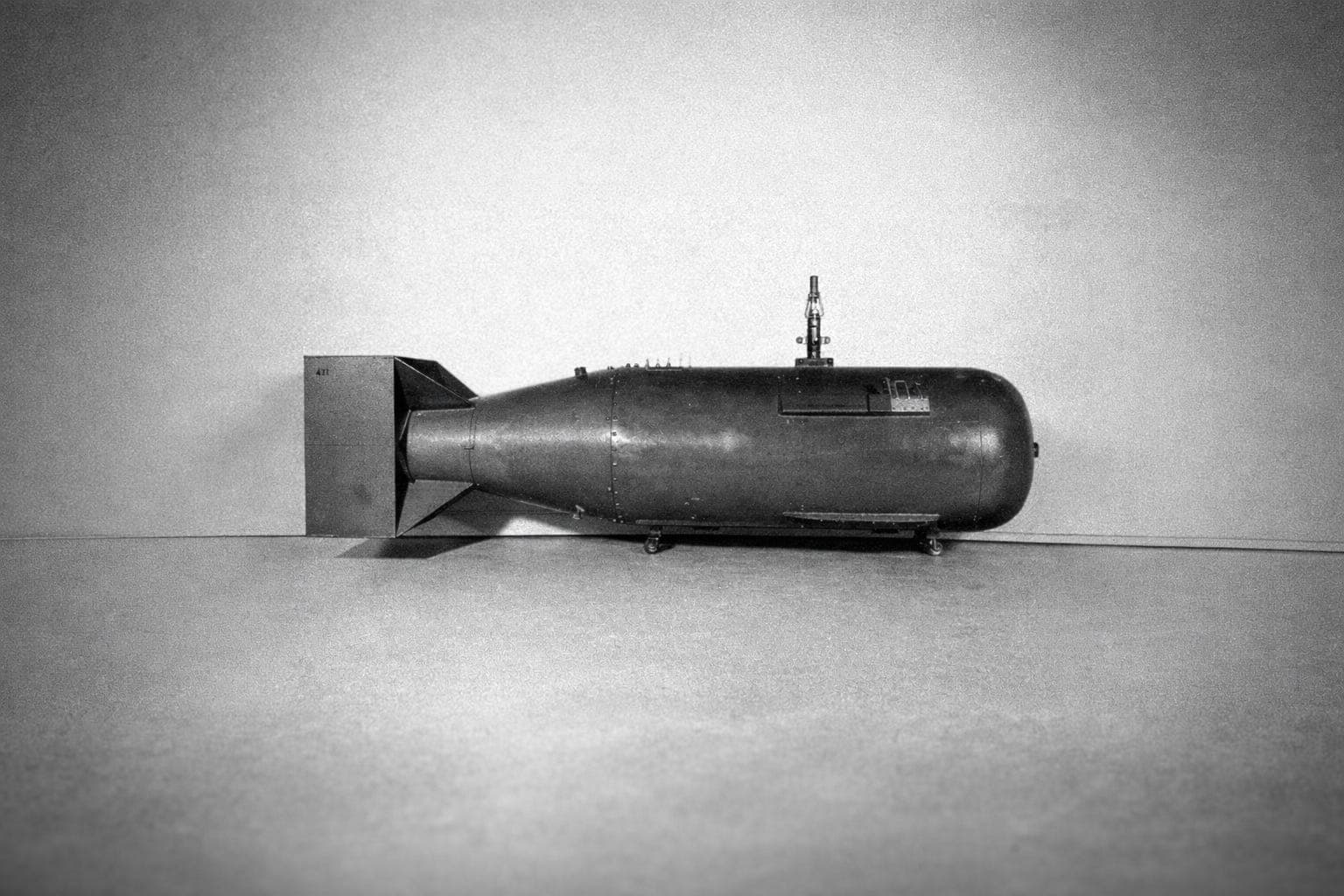

An “offset” is an edge: when adversaries have more people or equipment, you offset their advantage with a breakthrough technology. The first offset was nuclear weapons. The second was stealth aircraft and precision-guided munitions. The third is AI and autonomy.

Or, that was the bet behind Project Maven. Instead of soldiers staring at drone footage for 16 hours per day — “They get lazy, they get tired, they get distracted, they miss things,” Stanley said — a team was established to get AI to detect fishy stuff, then trigger the next step.

“The hypothesis was: if we got AI in the hands of the warfighter, it would work,” he said.

It didn’t work. Not because the computer vision models couldn’t detect cars and people. They could. But because the military processes — involving whiteboards, PowerPoint, pieces of paper, chat, and email — couldn’t accommodate the AI.

“And so we rejected the hypothesis that getting the AI in the hands of the warfighter was the right answer,” Stanley said. “What we really needed to do was take three steps back and say, ‘The real issue isn’t AI. The real issue is workflow. How are we making decisions?’”

TMI

To understand the military’s predicament, you have to understand two things.