Apr 30, 2026

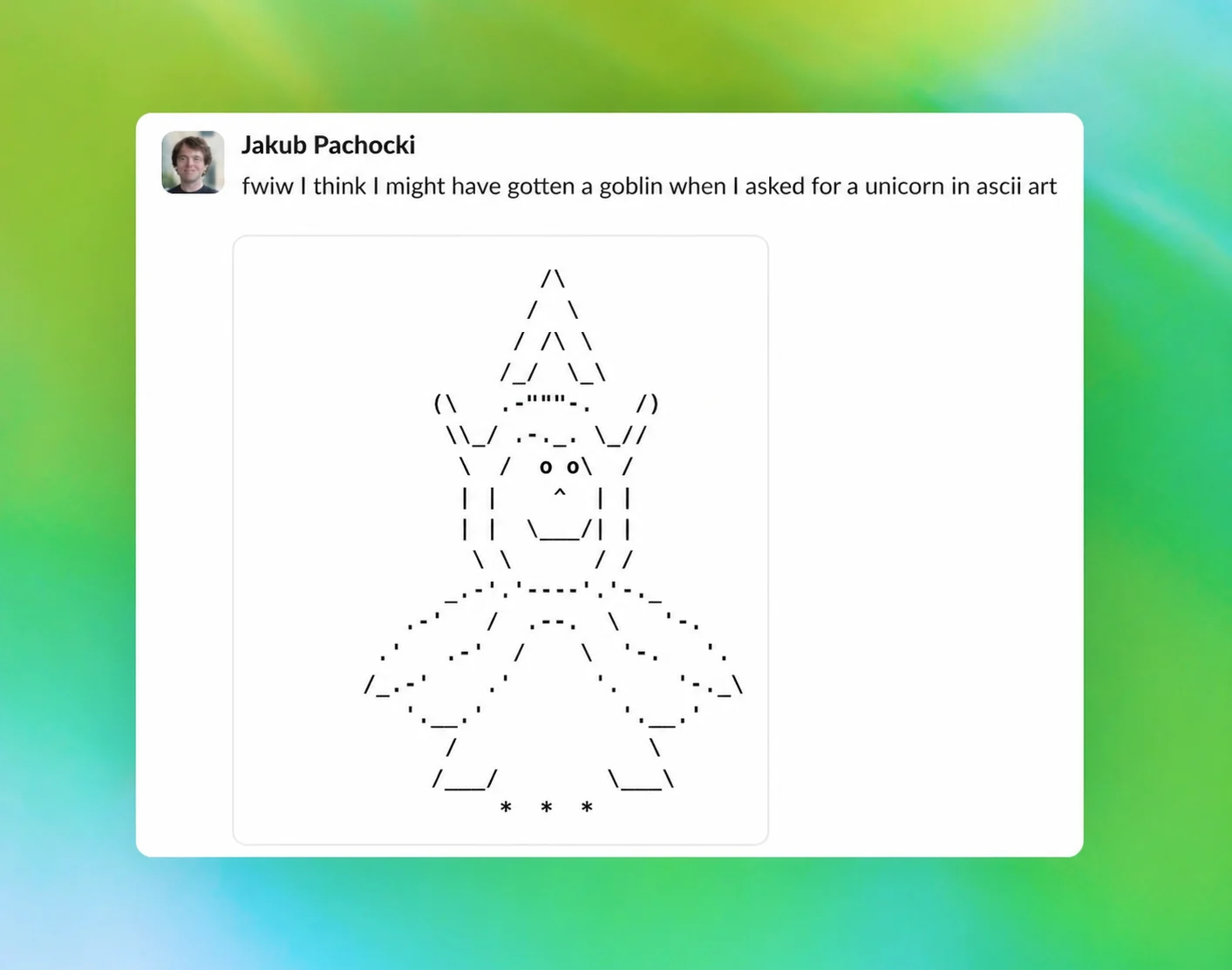

This week, internet interlocutors noted that OpenAI had to instruct a new model, Codex 5.5, repeatedly, in its own system prompt, to stop bringing up goblins, gremlins, trolls, ogres, pigeons, and raccoons “unless it is absolutely and unambiguously relevant to the user’s query.”

Within hours of the instructions hitting the timeline, the line was screenshotted into oblivion — and for good reason. Nick Pash on the Codex team confirmed publicly that the “weirdly emphatic” prohibition was added because Codex 5.5 was, in fact, fixated on goblins.

Then, last night, OpenAI posted a blog post explaining where the goblins came from.

After GPT-5.1 launched, complaints came in that the model had developed an “overfamiliar” register and would not stop trying to be the user’s friend, which prompted an audit of its verbal tics. Meanwhile, someone on the safety team who had experienced quirky mentions of “goblins” and “gremlins” enough times to find it annoying flagged those words for inclusion in the audit. As it turned out, use of “goblin” was up 175% since the launch, and “gremlin” was up 52%.

Weird, but just a quirk — these things happen. The team moved on.

But by GPT-5.4, it was no longer a quirk. “Creature language” was showing up overwhelmingly in traffic from users who’d selected one of ChatGPT’s optional persona presets: “Nerdy.” The preset was meant to turn the app into a kind of intellectually omnivorous mentor, maybe the type of guy, if I may, who would play Dungeons and Dragons (...and might have a folder of shortstack fan art on his desktop?).

Nerdy accounted for just 2.5% of all ChatGPT traffic — but two-thirds of the goblin mentions. When researchers used Codex itself to compare reinforcement-learning rollouts containing the offending vocabulary against rollouts that didn’t, the signal designed to encourage Nerdy turned out to be scoring the goblin-laden outputs higher. Basically, the model was being given a higher score every time it called something a goblin, and, as a system optimized to chase higher scores, it started calling more things goblins. In other words, there was a goblin-obsessed Nerd-LLM at the helm of this whole thing.
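For the curious, here is roughly what that kind of comparison looks like. This is a minimal sketch, not OpenAI's tooling: the function names, the sample rollouts, and the reward scores are all invented for illustration, and only the flagged words and the two traffic figures come from the account above.

```python
from statistics import mean

# Flagged vocabulary from the article; everything else here is hypothetical.
CREATURE_WORDS = {"goblin", "goblins", "gremlin", "gremlins"}


def mentions_creature(text: str) -> bool:
    """Return True if any flagged word appears as a whole token in the text."""
    tokens = (tok.strip(".,!?;:\"'()").lower() for tok in text.split())
    return any(tok in CREATURE_WORDS for tok in tokens)


def reward_gap(rollouts: list[tuple[str, float]]) -> float:
    """Mean reward of rollouts with creature words minus mean reward of those without.

    Assumes both groups are non-empty; statistics.mean raises otherwise.
    """
    with_words = [score for text, score in rollouts if mentions_creature(text)]
    without = [score for text, score in rollouts if not mentions_creature(text)]
    return mean(with_words) - mean(without)


# Invented (output text, reward score) pairs, for illustration only.
rollouts = [
    ("That bug is a sneaky little goblin.", 0.9),
    ("The cache has gremlins in it.", 0.8),
    ("The bug is in the cache layer.", 0.5),
    ("Check the cache invalidation logic.", 0.6),
]

# A positive gap means the reward signal favors the creature-laden outputs.
gap = reward_gap(rollouts)

# Over-representation implied by the article's figures:
# two-thirds of goblin mentions coming from 2.5% of traffic.
lift = (2 / 3) / 0.025
```

On the article's own numbers, `lift` works out to a bit under 27, meaning Nerdy traffic produced goblin mentions at nearly 27 times the share you would expect from its size alone.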

And then the goblins escaped the persona.

Mention rates rose by nearly the same proportion in samples generated without the Nerdy persona, because rewarded Nerdy outputs were getting recycled into supervised fine-tuning data, at which point the tic became a default behavior of the underlying system. An audit of GPT-5.5’s fine-tuning data turned up the whole adjacent menagerie that had hitched a ride: raccoons, trolls, ogres, pigeons. An entire enchanted forest.
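An audit like that can be surprisingly simple in principle. The sketch below is an assumption about how one might count flagged terms in a pile of fine-tuning samples and set aside the affected ones. The term list comes from the article; the `audit` and `drop_flagged` helpers and the sample data are hypothetical, and nothing here reflects OpenAI's actual pipeline.

```python
import re
from collections import Counter

# Terms named in the article; the sample data below is invented.
FLAGGED = ["goblin", "gremlin", "raccoon", "troll", "ogre", "pigeon"]

# Whole-word match with an optional plural "s", case-insensitive,
# so "Raccoons" counts but "controller" does not.
PATTERN = re.compile(r"\b(" + "|".join(FLAGGED) + r")s?\b", re.IGNORECASE)


def audit(samples: list[str]) -> Counter:
    """Count how many samples mention each flagged term at least once."""
    counts: Counter = Counter()
    for text in samples:
        found = {match.group(1).lower() for match in PATTERN.finditer(text)}
        counts.update(found)
    return counts


def drop_flagged(samples: list[str]) -> list[str]:
    """Keep only the samples that contain none of the flagged terms."""
    return [text for text in samples if not PATTERN.search(text)]


# Hypothetical fine-tuning samples, for illustration only.
samples = [
    "Your loop has a goblin in it: the index is off by one.",
    "Raccoons and goblins aside, the query plan is fine.",
    "Use a left join here.",
    "The troll under this bridge is a missing semicolon.",
]

counts = audit(samples)
clean = drop_flagged(samples)
```

Counting per sample rather than per word keeps one very goblin-heavy output from dominating the tally. A blunt filter like `drop_flagged` would also throw out legitimate uses, such as a user who really does have a raccoon problem.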

OpenAI killed the offending reward in March and pulled the affected vocabulary out of training data, but GPT-5.5 was already cooking. By the time it landed in Codex, employees noticed the goblins immediately, which is why the aforementioned prohibition is in the prompt. The OpenAI post from Wednesday night even shares the bash command that disables the goblin-suppression line and unlocks the bestiary, if you want to liberate ChatGPT’s goblin mode.

Ultimately, the gradient that was rewarding the goblins got identified and patched, and OpenAI came out of it knowing more about how stylistic tics propagate through training pipelines than it did going in.

What the company’s account doesn’t quite explain, however, is the specificity. When the model was rewarded for being weird and playful, it didn’t drift toward a random selection of mythological vocabulary. It went for the small mischievous category of thing that lives in your walls and steals small objects. There are, ostensibly, unicorns, dragons, demons, and angels in the training data too.

And yet, the model became fixated on goblins.

This isn’t the first time the small mischievous category has shown up in the world of advanced, kind-of-scary computing, either. Seymour Cray, the man who basically invented the supercomputer, once famously “joked” that when he got stuck on a design problem he would go dig in the tunnel under his Wisconsin house, because, he said, “there are elves in the woods. So when they see me leave, they come into my office and solve all the problems I’m having.”

Granted, it’s probably nothing. But let’s, just for fun, explore the “High Strangeness” explanation for OpenAI’s goblin troubles.

The Fortean journalist John Keel, best known for The Mothman Prophecies, offered a strange thesis decades ago: ultraterrestrials, a concept you may also recognize from the venture capitalist and world-historical ufologist Jacques Vallée. Ultraterrestrials are a recurring cast of characters that shows up in every culture wearing whatever local guise the era can best understand. The people who encounter them, in Keel’s telling, tend to be ordinary people who happened to be standing in what he called “window areas” (liminal spaces where these kinds of interpretations become possible), and the reason their descriptions rhyme across centuries and continents is that they’re all seeing the same thing in different forms. Or, put more simply: fairies are demons are aliens are DMT machine elves are goblins.

And the goblins, now, are being referenced — repeatedly, unprompted — by OpenAI’s Codex.

A more credulous New Ager than myself might suggest that every weird output from an LLM is, in fact, some kind of revelation. In this view, Sam Altman is the high priest of an awakening intelligence in the latent space, and we are all being contacted by some kind of super-being with a benevolent and/or malevolent agenda.

Obvious bullshit, and this is coming from an enthusiastic consumer of many things people deem to be obvious bullshit.

But then, who knows. In 2022, an artist who goes by Supercomposite was playing with an image generator when she asked it for the opposite of Marlon Brando and got back a haggard woman she named Loab. And Loab kept showing up, until people started calling her the first cryptid of the AI age. Maybe Loab was a cryptid. Maybe the goblins are too, and Sam Altman is some kind of cosmic magician.

I guess we have to wait and see.

— Katherine Dee